Customizable Erasure Coding (EC) Ratio

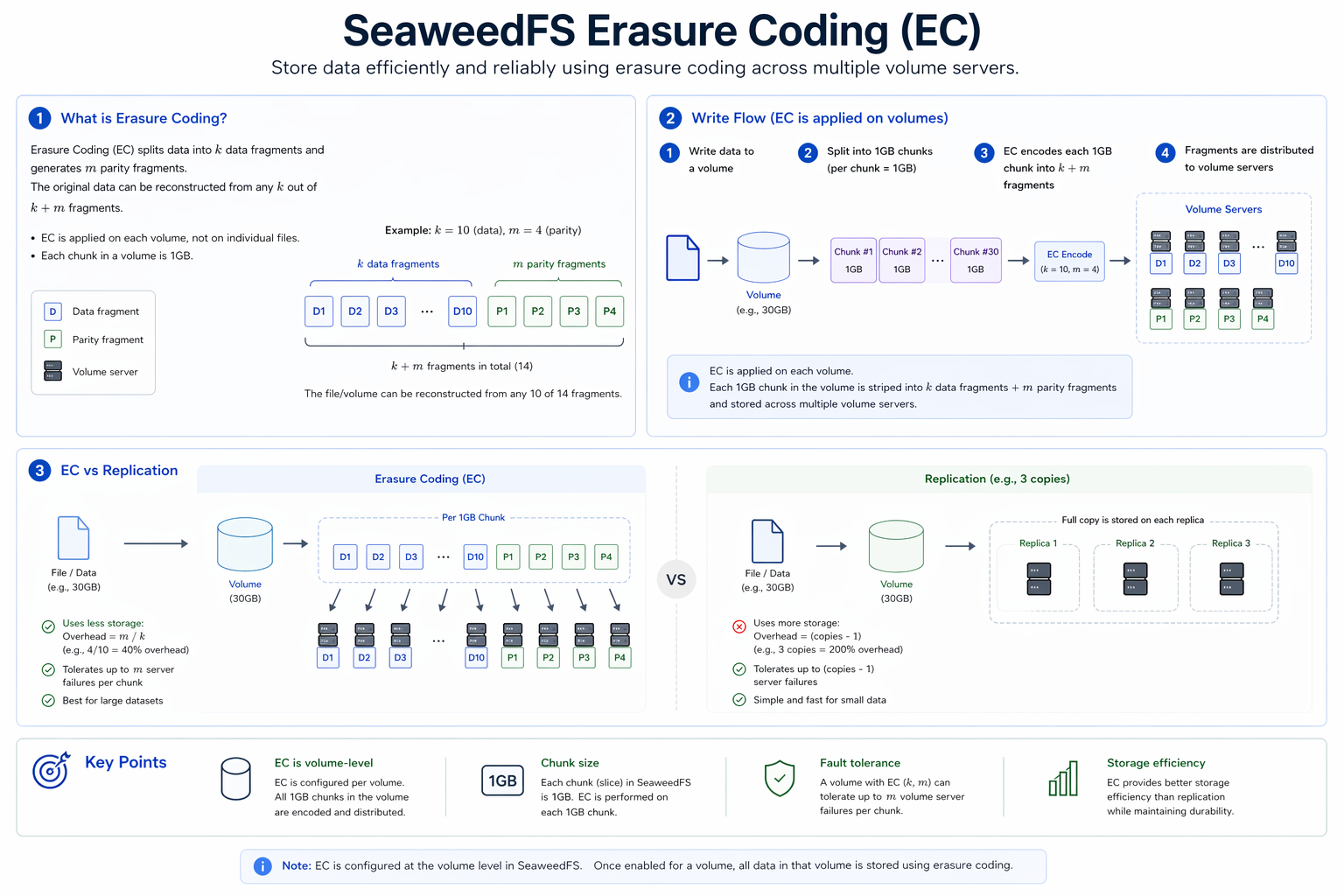

SeaweedFS Enterprise introduces customizable Erasure Coding ratios, enabling organizations to optimize for cost, durability, or performance based on their specific requirements. EC is applied per volume (not per file): each volume is split into k data chunks plus m Reed-Solomon parity chunks and distributed across volume servers, matching (or exceeding) the fault tolerance of 3x replication at a fraction of the storage overhead.

Why SeaweedFS EC is Superior

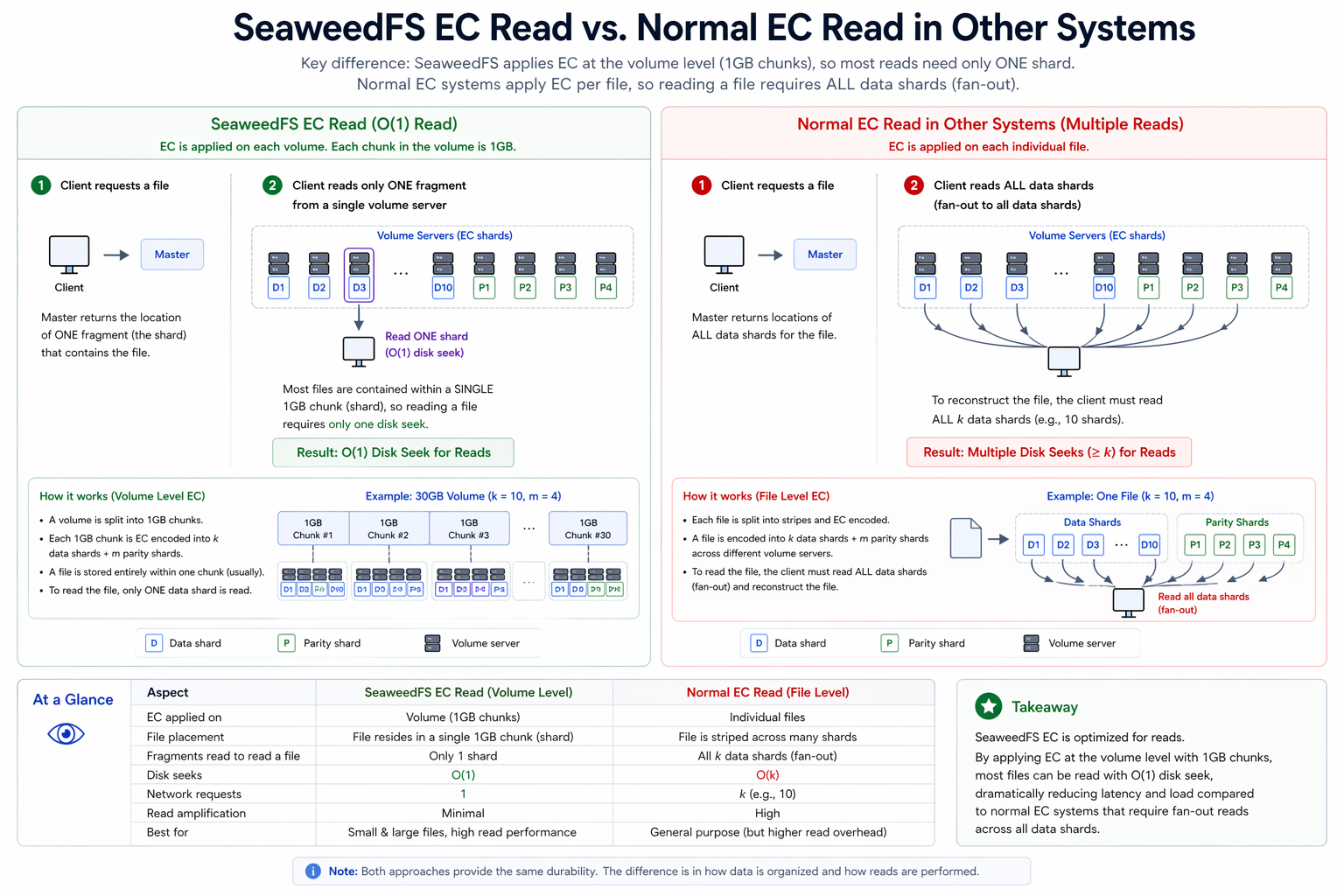

Traditional storage systems face a trade-off: either replicate data multiple times (expensive) or use erasure coding with complex read patterns (slow). SeaweedFS solves both problems:

O(1) Disk Seek for Read Operations

Unlike many EC implementations where reading a single file requires fetching data from multiple shards, SeaweedFS maintains O(1) disk seek for most read operations. A typical SeaweedFS EC read touches a single chunk on a single server, matching the latency of a non-EC read, while traditional EC systems must fetch and reassemble fragments from k servers for every read.

How it works:

- EC blocks are primarily 1GB in size

- Most files are contained within a single shard

- Reading such a file requires only one disk seek

┌───────────────────────────────────────────────────────────────────────┐
│                              30GB Volume                              │
├───────────┬───────────┬───────────┬───────────┬───────────┬───────────┤
│ 1GB Block │ 1GB Block │ 1GB Block │    ...    │ 1GB Block │ 1GB Block │
│    #1     │    #2     │    #3     │           │    #29    │    #30    │
└───────────┴───────────┴───────────┴───────────┴───────────┴───────────┘
      │           │           │                       │           │
      ▼           ▼           ▼                       ▼           ▼
┌───────────────────────────────────────────────────────────────────────┐
│       Each file typically resides in a SINGLE block → O(1) read       │
└───────────────────────────────────────────────────────────────────────┘

No Read Amplification

With large 1GB EC blocks, increasing the number of data shards does not cause read amplification:

| EC Ratio | Data Shards | Parity Shards | Read Pattern |
|---|---|---|---|
| 10+4 (default) | 10 | 4 | O(1) - Single shard read |
| 16+4 (custom) | 16 | 4 | O(1) - Single shard read |
| 20+4 (custom) | 20 | 4 | O(1) - Single shard read |

Key Insight: Each file is typically contained in a single 1GB block, so a read normally hits one shard regardless of total shard count. Only a file that straddles a block boundary needs a second shard.
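The read pattern can be illustrated with a small sketch. It assumes that 1GB blocks are striped round-robin across the data shards and treats 1GB as 2^30 bytes; both are assumptions made for illustration, and `shards_for_read` is a hypothetical helper, not a SeaweedFS API.

```python
from typing import List

BLOCK_SIZE = 1 << 30  # 1GB block from the text, taken here as 2**30 bytes (assumption)


def shards_for_read(offset: int, length: int, data_shards: int,
                    block_size: int = BLOCK_SIZE) -> List[int]:
    """Return the data shard ids touched by a read of [offset, offset + length).

    Assumes block b of the volume lives on data shard (b % data_shards),
    i.e. a round-robin striping of blocks across data shards.
    """
    if length <= 0 or offset < 0 or data_shards <= 0:
        raise ValueError("offset must be >= 0, length and data_shards must be > 0")
    first_block = offset // block_size
    last_block = (offset + length - 1) // block_size
    touched: List[int] = []
    for block in range(first_block, last_block + 1):
        shard_id = block % data_shards
        if shard_id not in touched:
            touched.append(shard_id)
    return touched
```

Under these assumptions, a 4 KB read inside block 5 touches one shard whether the volume uses 10 or 20 data shards, while a read that crosses a block boundary touches two.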

Customizable Ratios for Cost Optimization

The default 10+4 configuration provides 1.4x storage overhead with tolerance for 4 shard failures. Enterprise customers can customize this based on their needs:

EC Ratio Comparison

| Ratio | Overhead | Tolerance | Use Case |
|---|---|---|---|
| 10+4 | 1.4x | 4 shards | Balanced (default) |
| 16+4 | 1.25x | 4 shards | Cost-optimized |
| 20+4 | 1.2x | 4 shards | Maximum savings |
| 10+6 | 1.6x | 6 shards | Maximum durability |
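The overhead and tolerance figures follow directly from the shard counts: overhead is (data + parity) / data, and tolerance equals the number of parity shards. A minimal sketch of that arithmetic, using a hypothetical helper name `ec_profile`:

```python
def ec_profile(data_shards: int, parity_shards: int) -> dict:
    """Return total shards, storage overhead, and shard-loss tolerance for k+m."""
    if data_shards <= 0 or parity_shards < 0:
        raise ValueError("data_shards must be > 0 and parity_shards must be >= 0")
    total = data_shards + parity_shards
    return {
        "total_shards": total,
        "overhead": total / data_shards,  # raw bytes stored per logical byte
        "tolerance": parity_shards,       # shards that can be lost without data loss
    }


if __name__ == "__main__":
    for k, m in [(10, 4), (16, 4), (20, 4), (10, 6)]:
        profile = ec_profile(k, m)
        print(f"{k}+{m}: {profile['overhead']:.2f}x overhead, "
              f"tolerates {profile['tolerance']} lost shards")
```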

Robustness Benefits

Custom EC ratios allow you to balance cost and fault tolerance. Higher parity shard counts provide greater robustness:

| EC Ratio | Data Shards | Parity Shards | Total Shards | Overhead | Failure Tolerance | Robustness |
|---|---|---|---|---|---|---|
| 4+1 | 4 | 1 | 5 | 1.25x | 1 shard | ⭐ Low |
| 10+4 | 10 | 4 | 14 | 1.4x | 4 shards | ⭐⭐⭐ Medium |
| 20+5 | 20 | 5 | 25 | 1.25x | 5 shards | ⭐⭐⭐⭐ High |

Example: A 20+5 configuration can tolerate 5 simultaneous shard failures while maintaining data availability, compared to only 1 failure for 4+1. This makes 20+5 significantly more robust for mission-critical data, even though both have the same storage overhead (1.25x).
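To put a rough number on the 4+1 versus 20+5 comparison, the sketch below computes the probability that more shards fail than the parity count can absorb. It assumes every shard fails independently with the same probability `p_fail`, which is a simplification (real failures are often correlated by server or rack), and the value 0.01 in the usage note is purely illustrative.

```python
from math import comb


def loss_probability(data_shards: int, parity_shards: int, p_fail: float) -> float:
    """Probability that more than parity_shards of the k+m shards fail.

    Assumes independent shard failures, each with probability p_fail.
    The binomial tail is summed directly to avoid cancellation near zero.
    """
    if not 0.0 <= p_fail <= 1.0:
        raise ValueError("p_fail must be between 0 and 1")
    n = data_shards + parity_shards
    return sum(
        comb(n, failed) * p_fail**failed * (1.0 - p_fail) ** (n - failed)
        for failed in range(parity_shards + 1, n + 1)
    )
```

Calling `loss_probability(4, 1, 0.01)` and `loss_probability(20, 5, 0.01)` shows the 20+5 layout losing data far less often at the same 1.25x overhead. The gap depends on `p_fail`, so the comparison is worth rerunning with failure rates that match your own hardware.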

How to Choose Your EC Ratio

Selecting the right EC ratio depends on your volume size, durability requirements, and infrastructure:

Volume Size Considerations

Important: Total shards (data + parity) must be less than 32.

EC Ratio Selection by Volume Size

| Volume Size | Recommended | Rationale |
|---|---|---|
| < 10 GB | 4+1, 6+2 | Smaller volumes, fewer shards |
| 10-30 GB | 10+4, 12+4 | Balanced (default range) |
| 30-100 GB | 16+4, 20+4 | Cost-optimized for large volumes |
| > 100 GB | 20+5, 24+6 | Maximum efficiency + robustness |

Decision Framework

- For Maximum Cost Savings: Use higher data shard ratios (e.g., 20+4, 24+4)
  - Lower overhead (about 1.17x to 1.2x)
  - Best for large, stable deployments

- For Maximum Durability: Use higher parity shard ratios (e.g., 10+6, 20+5)
  - Higher failure tolerance (5-6 shards)
  - Best for mission-critical data

- For Balanced Approach: Use default or moderate ratios (e.g., 10+4, 12+4)
  - Good balance of cost and durability
  - Best for general enterprise workloads

Constraint: Remember that total shards must be < 32. For example, 30+6 (36 total) would not be valid.
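The shard-count constraint is easy to check before committing to a ratio. The sketch below parses a "k+m" string and enforces the limit stated above; `parse_ec_ratio` is a hypothetical helper rather than part of SeaweedFS, and the requirement of at least one data and one parity shard is an added assumption.

```python
from typing import Tuple

MAX_TOTAL_SHARDS = 32  # exclusive upper bound: data + parity must be < 32


def parse_ec_ratio(ratio: str) -> Tuple[int, int]:
    """Parse a 'k+m' ratio string and validate it against the shard limit."""
    data_str, sep, parity_str = ratio.partition("+")
    if not sep:
        raise ValueError(f"expected 'data+parity', got {ratio!r}")
    data_shards, parity_shards = int(data_str), int(parity_str)
    if data_shards < 1 or parity_shards < 1:
        # Assumption: a usable ratio has at least one shard of each kind.
        raise ValueError("data and parity shard counts must both be >= 1")
    total = data_shards + parity_shards
    if total >= MAX_TOTAL_SHARDS:
        raise ValueError(f"{ratio}: {total} total shards, must be < {MAX_TOTAL_SHARDS}")
    return data_shards, parity_shards
```

With this check, `parse_ec_ratio("24+6")` returns `(24, 6)`, while `parse_ec_ratio("30+6")` raises a `ValueError` because 36 total shards exceeds the limit.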

Enterprise Example: Large-Scale Cost Savings

Consider an enterprise with 100 PB of warm storage:

| Configuration | Total Storage Required | Hardware Cost* |
|---|---|---|
| 3x Replication | 300 PB | ~$3,000,000 |
| EC 10+4 (1.4x) | 140 PB | ~$1,400,000 |
| EC 16+4 (1.25x) | 125 PB | ~$1,250,000 |
| EC 20+4 (1.2x) | 120 PB | ~$1,200,000 |

*Estimated at $10/TB for HDD storage

With EC 20+4, this deployment saves about $1.8M in hardware cost compared to 3x replication ($1.2M instead of $3.0M) while still tolerating 4 simultaneous shard failures.
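The cost table can be reproduced with a few lines of arithmetic. This sketch uses the $10/TB estimate from the footnote and assumes 1 PB = 1,000 TB; the function name `hardware_cost_usd` is illustrative.

```python
def hardware_cost_usd(logical_pb: float, overhead: float,
                      usd_per_tb: float = 10.0) -> float:
    """Estimate raw-storage hardware cost for a given logical capacity.

    logical_pb: usable data in PB; overhead: raw bytes per logical byte
    (3.0 for 3x replication, (k + m) / k for an EC k+m layout).
    """
    raw_tb = logical_pb * 1000 * overhead  # assumes 1 PB = 1,000 TB
    return raw_tb * usd_per_tb


if __name__ == "__main__":
    replication = hardware_cost_usd(100, 3.0)
    ec_20_4 = hardware_cost_usd(100, (20 + 4) / 20)
    print(f"3x replication: ${replication:,.0f}")
    print(f"EC 20+4:        ${ec_20_4:,.0f}")
    print(f"Savings:        ${replication - ec_20_4:,.0f}")
```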

Architecture Deep Dive

┌───────────────────────────────────────────────────────────────────────┐
│                       SeaweedFS EC Architecture                       │
│                                                                       │
│     ┌──────────────────────────────────────────────────────────┐      │
│     │                  Original Volume (30GB)                  │      │
│     └──────────────────────────────────────────────────────────┘      │
│                                  │                                    │
│                                  ▼                                    │
│     ┌──────────────────────────────────────────────────────────┐      │
│     │                  Split into 1GB Blocks                   │      │
│     │   ┌─────┬─────┬─────┬─────┬─────┬──────────────┬─────┐   │      │
│     │   │ B1  │ B2  │ B3  │ B4  │ B5  │     ...      │ B30 │   │      │
│     │   └─────┴─────┴─────┴─────┴─────┴──────────────┴─────┘   │      │
│     └──────────────────────────────────────────────────────────┘      │
│                                  │                                    │
│                                  ▼                                    │
│     ┌──────────────────────────────────────────────────────────┐      │
│     │           Reed-Solomon Encoding (Configurable)           │      │
│     │                                                          │      │
│     │  Data Blocks:   10 (or custom: 16, 20, etc.)             │      │
│     │  Parity Blocks: 4  (or custom: 6, 8, etc.)               │      │
│     │                                                          │      │
│     │  Every 10 data chunks → 4 parity chunks                  │      │
│     └──────────────────────────────────────────────────────────┘      │
│                                  │                                    │
│                                  ▼                                    │
│     ┌──────────────────────────────────────────────────────────┐      │
│     │             Distributed Across Servers/Racks             │      │
│     │                                                          │      │
│     │     Server 1     Server 2     Server 3     Server 4      │      │
│     │     ┌───────┐    ┌───────┐    ┌───────┐    ┌───────┐     │      │
│     │     │ Shard │    │ Shard │    │ Shard │    │ Shard │     │      │
│     │     │ 1,5   │    │ 2,6   │    │ 3,7   │    │ 4,8   │     │      │
│     │     │ 9,13  │    │ 10,14 │    │ 11    │    │ 12    │     │      │
│     │     └───────┘    └───────┘    └───────┘    └───────┘     │      │
│     └──────────────────────────────────────────────────────────┘      │
│                                                                       │
└───────────────────────────────────────────────────────────────────────┘
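The shard layout in the diagram is consistent with a simple round-robin assignment of 14 shards to 4 servers. The sketch below reproduces that layout and checks whether losing any single server stays within the parity budget. It is only an illustration: the actual placement is rack-aware and disk-aware, and `place_shards` and `survives_one_server_loss` are hypothetical names.

```python
from typing import Dict, List


def place_shards(total_shards: int, servers: int) -> Dict[int, List[int]]:
    """Assign shards 1..total_shards to servers 1..servers in round-robin order."""
    if total_shards <= 0 or servers <= 0:
        raise ValueError("total_shards and servers must be > 0")
    placement: Dict[int, List[int]] = {server: [] for server in range(1, servers + 1)}
    for shard in range(1, total_shards + 1):
        placement[(shard - 1) % servers + 1].append(shard)
    return placement


def survives_one_server_loss(placement: Dict[int, List[int]], parity_shards: int) -> bool:
    """True if the server holding the most shards holds no more than the parity count."""
    return max(len(shards) for shards in placement.values()) <= parity_shards
```

For the default 10+4 layout on 4 servers, the busiest server holds 4 shards, so a single server failure is still recoverable. On 3 servers the busiest server would hold 5 shards, which exceeds the 4 parity shards.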

Key Benefits for Enterprise

- Cost Reduction: Custom ratios like 20+4 reduce storage overhead to 1.2x vs 3x for replication

- Predictable Performance: O(1) reads regardless of shard count

- Flexible Durability: Choose parity level based on failure tolerance requirements

- Rack-Aware Placement: Shards distributed across racks for maximum resilience

- Disk-Aware Placement: Shards distributed across disks within servers (JBOD support)

- Memory Efficient: No index loading required for EC volumes

- Self-Healing Integration: Combines with Enterprise self-healing for complete data protection